AI in Psychological Assessment

The integration of Artificial Intelligence (AI) into psychological assessment is transforming the way mental health professionals evaluate, diagnose, and monitor individuals. Traditionally, psychological assessments relied heavily on human judgment, standardized tests, and face-to-face interactions. Today, AI-driven tools are enhancing these processes by offering faster analysis, predictive insights, and scalable solutions.

From analyzing speech patterns and facial expressions to interpreting behavioral data, AI promises greater precision and efficiency in assessment. However, this technological advancement also raises significant ethical concerns. Questions about privacy, bias, transparency, and human oversight are at the forefront of discussions surrounding AI in psychology.

This article explores the ethical challenges associated with AI integration in psychological assessment and outlines strategic responses to ensure responsible and effective use.

The Role of AI in Psychological Assessment

AI technologies are increasingly being used to support various aspects of psychological evaluation. These systems analyze large datasets to identify patterns that may not be easily detectable by humans.

Data-Driven Insights

AI systems can process vast amounts of data, including patient histories, behavioral patterns, and even social media activity. This allows for more comprehensive assessments and early detection of mental health conditions.

Predictive Analytics

Machine learning models can estimate the likelihood of certain psychological conditions based on historical data. This can help clinicians intervene early and develop preventive strategies.

Automation of Routine Tasks

AI tools can automate administrative tasks such as scoring tests, generating reports, and scheduling appointments. This allows mental health professionals to focus more on patient care.

Remote and Accessible Care

AI-powered platforms enable remote psychological assessments, making mental health services more accessible, especially in underserved areas.

While these benefits are significant, they must be balanced against the ethical implications of using AI in such a sensitive domain.

Ethical Challenges in AI-Based Psychological Assessment

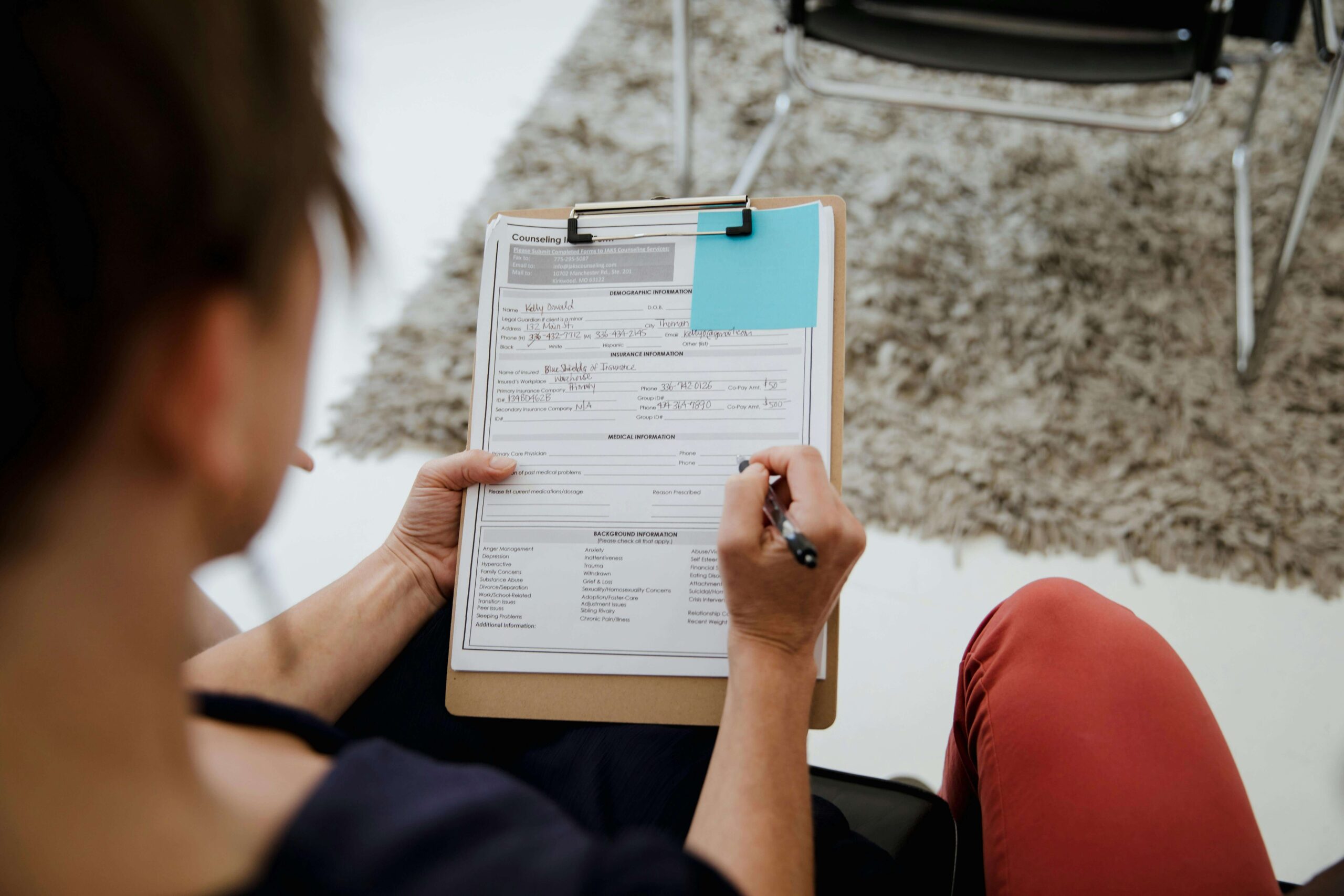

1. Data Privacy and Confidentiality

Psychological data is highly sensitive, often involving personal thoughts, emotions, and behaviors. The use of AI requires collecting and storing large amounts of this data, raising concerns about privacy and confidentiality.

Unauthorized access or data breaches can have severe consequences for individuals. Ensuring secure data storage and strict access controls is essential.

2. Bias and Fairness

AI systems are only as good as the data they are trained on. If the training data contains biases, the AI system may produce biased outcomes. This can lead to unfair assessments and discrimination against certain groups.

For example, cultural differences in behavior and expression may be misinterpreted by AI systems, resulting in inaccurate diagnoses.

3. Lack of Transparency

Many AI models, particularly deep learning systems, operate as “black boxes,” making it difficult to understand how decisions are made. In psychological assessment, this lack of transparency can undermine trust and accountability.

Patients and practitioners need clear explanations of how AI systems arrive at their conclusions.

4. Over-Reliance on Technology

There is a risk that clinicians may become overly reliant on AI tools, potentially diminishing their critical thinking and professional judgment. Psychological assessment requires empathy, context, and human understanding—qualities that AI cannot fully replicate.

5. Informed Consent

Patients must be informed about how their data is being used and how AI systems contribute to their assessment. Obtaining informed consent is a fundamental ethical requirement, but it becomes more complex when AI is involved, because the way a model reaches its output can be difficult to explain in plain terms.

6. Accountability and Responsibility

When an AI system makes an incorrect assessment, determining accountability can be challenging. Does responsibility lie with the developer, the clinician, or the organization? Clear guidelines are needed to address this issue.

Strategic Responses to Ethical Challenges

To address these challenges, organizations and professionals must adopt strategic approaches that prioritize ethics and responsibility.

1. Strengthening Data Governance

Robust data governance frameworks are essential to protect patient information. This includes implementing encryption, access controls, and regular security audits.

Organizations should also limit data collection to what is necessary, reducing the risk of misuse.
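As a rough illustration of data minimization, the sketch below keeps only an allow-listed set of fields and replaces the raw patient identifier with a keyed hash before a record is passed to an AI tool. The field names, the `ALLOWED_FIELDS` list, and both function names are hypothetical and chosen only for this example. Pseudonymization of this kind complements encryption and access controls; it does not replace them.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the assessment tool actually needs.
ALLOWED_FIELDS = {"age_band", "questionnaire_score", "session_count"}


def pseudonymize_id(patient_id: str, secret_key: bytes) -> str:
    """Replace a patient identifier with a keyed hash (HMAC-SHA256)."""
    return hmac.new(secret_key, patient_id.encode("utf-8"), hashlib.sha256).hexdigest()


def minimize_record(record: dict, secret_key: bytes) -> dict:
    """Keep only allow-listed fields and swap the raw ID for a pseudonym."""
    minimized = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    minimized["pseudonym"] = pseudonymize_id(record["patient_id"], secret_key)
    return minimized
```

In this sketch, names, free-text notes, and any other field outside the allow-list never leave the source system. The secret key would need to be stored separately from the minimized data.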

2. Ensuring Fairness and Reducing Bias

Developers must use diverse and representative datasets to train AI models. Regular testing and validation should be conducted to identify and mitigate biases.

Involving multidisciplinary teams, including psychologists and ethicists, can help ensure fairness in AI systems.
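One simple form of the regular testing described above is to compare how often a model flags people in different groups. The sketch below is a minimal example of that check. The `(group, flagged)` record format and both function names are assumptions made for illustration. A gap in flag rates does not prove bias on its own, since underlying rates may differ between groups, but it is a useful prompt for closer review.

```python
from collections import defaultdict


def flag_rates_by_group(records):
    """Return, per group, the share of people the model flagged.

    Each record is a (group, flagged) pair, where flagged is True when the
    model indicated a possible condition.
    """
    totals = defaultdict(int)
    flagged = defaultdict(int)
    for group, was_flagged in records:
        totals[group] += 1
        if was_flagged:
            flagged[group] += 1
    return {g: flagged[g] / totals[g] for g in totals}


def max_rate_gap(rates):
    """Largest difference in flag rate between any two groups."""
    values = list(rates.values())
    return max(values) - min(values)
```

For example, if group A has one flagged person out of four and group B has two out of four, the rates are 0.25 and 0.5 and the gap is 0.25. A team might agree in advance on the gap size that triggers a manual audit.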

3. Promoting Transparency and Explainability

AI systems should be designed to provide clear and understandable explanations of their decisions. Explainable AI (XAI) techniques can help bridge the gap between complex algorithms and human understanding.

Transparency builds trust and allows practitioners to make informed decisions.
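To make the idea of an explanation concrete, the sketch below uses a deliberately simple linear risk score, where each feature's contribution is its weight multiplied by its value. The feature names, weights, and bias are invented for illustration and do not come from any validated instrument. Deep learning models need dedicated XAI techniques, which this example does not cover.

```python
import math

# Hypothetical weights for an illustrative linear risk score; not a validated model.
WEIGHTS = {"sleep_disruption": 0.8, "questionnaire_score": 0.05, "social_withdrawal": 0.6}
BIAS = -2.0


def explain_score(features: dict):
    """Return the predicted probability and each feature's additive contribution."""
    contributions = {name: WEIGHTS[name] * features[name] for name in WEIGHTS}
    logit = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Sort so the most influential features are listed first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked
```

A clinician reading the ranked list can see which inputs pushed the score up or down and judge whether that reasoning makes sense for the patient in front of them.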

4. Maintaining Human Oversight

AI should be used as a supportive tool rather than a replacement for human judgment. Clinicians must remain actively involved in the assessment process, using their expertise to interpret AI-generated insights.

Human oversight ensures that ethical considerations and contextual factors are taken into account.
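One way to build this oversight into a workflow is to treat every AI output as a suggestion that stays non-final until a clinician confirms it. The sketch below is a minimal version of that idea. The `AIResult` type, the `route_result` function, and the 0.9 threshold are hypothetical, and an actual threshold would need clinical validation.

```python
from dataclasses import dataclass


@dataclass
class AIResult:
    label: str          # the model's suggested finding
    confidence: float   # model-reported confidence in [0, 1]


def route_result(result: AIResult, review_threshold: float = 0.9) -> dict:
    """Attach a review status; nothing is released without clinician sign-off."""
    if not 0.0 <= result.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    priority = "standard" if result.confidence >= review_threshold else "detailed"
    return {
        "suggested_label": result.label,
        "confidence": result.confidence,
        "review_priority": priority,
        "final": False,  # becomes True only after a clinician confirms
    }
```

In this design, model confidence changes only how closely a result is reviewed. It never decides whether a result is reviewed at all.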

5. Enhancing Ethical Training

Professionals working with AI in psychological assessment should receive training on ethical issues and best practices. This includes understanding the limitations of AI and recognizing potential risks.

Educational programs should integrate AI ethics into psychology and healthcare curricula.

6. Establishing Regulatory Frameworks

Governments and professional bodies must develop clear regulations and guidelines for the use of AI in psychological assessment. These frameworks should address issues such as data privacy, accountability, and transparency.

Standardization can help ensure consistent and ethical practices across the industry.

7. Encouraging Patient Engagement

Patients should be actively involved in the assessment process. Providing clear information about AI tools and obtaining informed consent are essential steps.

Engaging patients fosters trust and empowers them to make informed decisions about their care.

Balancing Innovation and Ethics

The integration of AI in psychological assessment requires a delicate balance between innovation and ethics. While AI offers numerous benefits, it also introduces risks that must be carefully managed.

Organizations must adopt a proactive approach, embedding ethical considerations into every stage of AI development and deployment. This includes continuous monitoring, evaluation, and improvement of AI systems.

Collaboration between technologists, psychologists, policymakers, and patients is crucial for achieving this balance. By working together, stakeholders can create AI systems that are both innovative and ethically sound.

Future Directions

The future of AI in psychological assessment will be shaped by advancements in technology and evolving ethical standards.

Personalized Mental Health Care

AI will enable highly personalized assessments and treatment plans, tailored to individual needs and preferences.

Integration with Wearable Technology

Wearable devices will provide real-time data on physiological and behavioral patterns, which could improve the accuracy of assessments.

Global Accessibility

AI-powered platforms will make psychological assessment more accessible worldwide, particularly in regions with limited mental health resources.

Continuous Ethical Evolution

As AI technology evolves, ethical frameworks must also adapt. Ongoing research and dialogue will be essential to address emerging challenges.

Conclusion

AI integration in psychological assessment has the potential to revolutionize mental health care, offering improved accuracy, efficiency, and accessibility. However, it also raises significant ethical challenges that cannot be ignored.

By implementing strategic responses such as strong data governance, bias mitigation, transparency, and human oversight, organizations can ensure responsible use of AI. Ethical considerations must remain at the core of innovation, guiding the development and application of AI technologies.

Ultimately, the goal is to create a system where AI enhances human capabilities without compromising ethical standards. With careful planning and collaboration, this vision can become a reality.