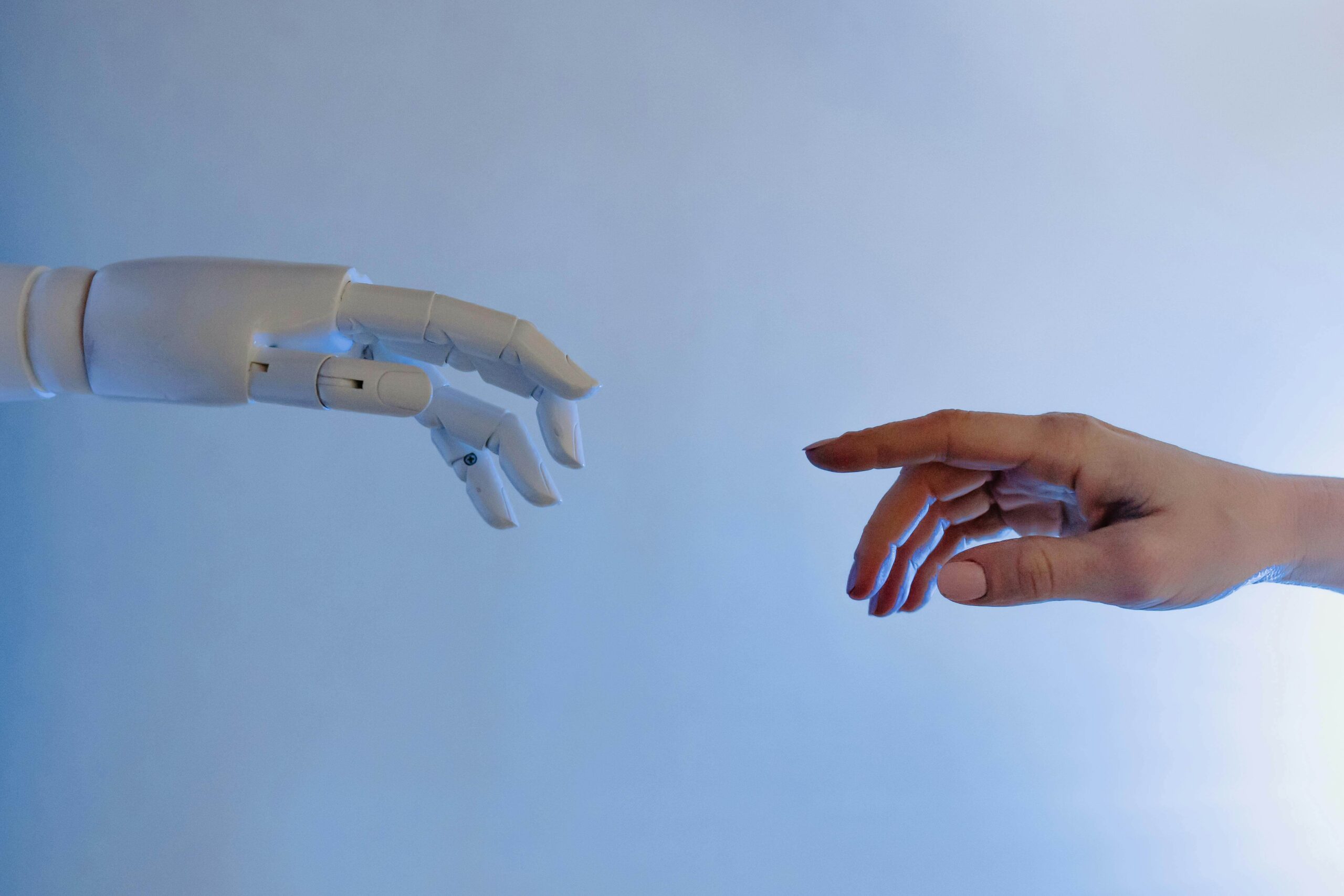

Critical Thinking in AI

In today’s rapidly evolving digital world, Artificial Intelligence (AI) is no longer just a tool; it has become a collaborative partner. From writing and research to business decision-making and creative design, humans are increasingly working alongside AI systems. While this collaboration offers immense benefits, it also introduces a critical requirement: the need for strong human critical thinking.

AI can process vast amounts of data, generate insights, and automate tasks, but it does not “think” in the human sense. It lacks true understanding, judgment, and ethical reasoning. This is where critical thinking becomes essential. In human-AI collaborations, the real value does not come from AI alone, but from how humans interpret, question, and refine AI-generated outputs.

Understanding Human-AI Collaboration

Human-AI collaboration refers to the partnership between people and AI systems to achieve specific goals. Instead of replacing humans, AI is designed to enhance human capabilities.

For example, in business, AI can analyze market trends and suggest strategies, but it is up to human decision-makers to evaluate those suggestions. In education, AI tools can generate summaries or explanations, but students must critically assess whether the information is accurate and meaningful.

This collaboration creates a dynamic where AI provides speed and data processing power, while humans contribute reasoning, context, and judgment.

What Is Critical Thinking?

Critical thinking is the ability to analyze information objectively, question assumptions, evaluate evidence, and make reasoned decisions. It involves skills such as:

- Asking the right questions
- Identifying biases
- Evaluating sources of information
- Recognizing logical fallacies
- Making informed judgments

In the context of AI, critical thinking means not accepting AI outputs at face value. Instead, users must assess the reliability, relevance, and implications of the information provided.

Why Critical Thinking Matters More Than Ever

As AI systems become more advanced, their outputs often appear highly convincing. They can generate well-structured responses, detailed analyses, and even creative content. However, this fluency can sometimes create a false sense of accuracy.

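AI systems may produce incorrect information, outdated data, or biased interpretations. Language models, for instance, can present fabricated facts or citations (often called “hallucinations”) in the same confident tone as accurate ones. Without critical thinking, users may unknowingly rely on flawed outputs.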

This is particularly important in high-stakes fields such as healthcare, law, finance, and education, where decisions based on incorrect information can have serious consequences.

Critical thinking acts as a safeguard, ensuring that AI is used responsibly and effectively.

The Risk of Over-Reliance on AI

One of the biggest challenges in human-AI collaboration is the risk of over-reliance. When AI tools make tasks easier and faster, users may become less engaged in the thinking process.

For example, a student might rely on AI to generate answers without fully understanding the subject. Similarly, professionals might accept AI-generated reports without verifying the data.

Over time, this can weaken critical thinking skills and reduce the ability to make independent decisions.

To avoid this, users must remain actively involved in the process, treating AI as a support system rather than a replacement for thinking.

Evaluating AI Outputs

A key aspect of critical thinking in AI collaboration is evaluating the outputs generated by AI systems. This involves asking questions such as:

- Is the information accurate and up to date?
- What sources might the AI have used?
- Are there any biases in the response?
- Does the answer fully address the question?
- What might be missing or overlooked?

By applying these questions, users can identify potential issues and improve the quality of the final outcome.

Recognizing Bias in AI Systems

AI systems learn from data, and that data often reflects human biases. As a result, AI outputs may unintentionally reinforce stereotypes or present skewed perspectives.

Critical thinking helps users recognize these biases and challenge them. For instance, if an AI-generated response seems one-sided, users can seek additional perspectives or verify the information through other sources.

Being aware of bias is essential for ensuring fairness and inclusivity in AI-driven decisions.

The Role of Human Judgment

AI excels at processing data, but it lacks human judgment. It cannot fully understand context, emotions, or ethical considerations.

Human judgment is especially important in situations that involve moral decisions, cultural sensitivity, or complex problem-solving. For example, an AI system might suggest a cost-effective business strategy, but a human must consider its ethical implications.

Critical thinking allows humans to apply their values and experience to AI-generated insights, ensuring that decisions align with broader goals and principles.

Enhancing Creativity Through Critical Thinking

Interestingly, critical thinking does not limit creativity—it enhances it. When humans critically engage with AI outputs, they can refine ideas, explore new perspectives, and develop more innovative solutions.

For example, a writer using AI-generated content can evaluate and improve the narrative, adding depth and originality. Similarly, designers can use AI suggestions as a starting point and build upon them creatively.

This collaborative process leads to better outcomes than relying on either AI or human effort alone.

Building Critical Thinking Skills in the AI Era

As AI becomes more integrated into daily life, developing strong critical thinking skills is essential. Some ways to strengthen these skills include:

- Practicing skepticism – Question information rather than accepting it immediately.
- Cross-checking sources – Verify AI outputs using reliable references.
- Engaging in discussions – Explore different viewpoints to gain a deeper understanding.
- Reflecting on decisions – Analyze how and why certain conclusions are reached.

Education systems and workplaces must also adapt by emphasizing critical thinking as a core skill in the AI era.

Ethical Responsibility in Human-AI Collaboration

With the growing use of AI, ethical responsibility becomes increasingly important. Users must ensure that AI is used in ways that are fair, transparent, and beneficial.

Critical thinking plays a key role in identifying ethical concerns, such as misinformation, data privacy issues, and potential misuse of AI technology.

By thinking critically, individuals can make responsible choices and contribute to the ethical development of AI systems.

The Future of Human-AI Collaboration

The future of human-AI collaboration will depend on how effectively humans can work alongside intelligent systems. As AI continues to evolve, the need for critical thinking will only increase.

Rather than competing with AI, humans must focus on developing skills that complement it—such as reasoning, creativity, and ethical judgment.

Organizations that encourage critical thinking will be better equipped to leverage AI’s potential while minimizing risks.

Conclusion

Human-AI collaboration represents a powerful partnership that can transform industries, improve productivity, and unlock new possibilities. However, the success of this collaboration depends on one crucial factor: critical thinking.

AI can provide information and suggestions, but it is up to humans to evaluate, interpret, and apply that information wisely. Without critical thinking, the benefits of AI may be overshadowed by errors, biases, and poor decision-making.

By strengthening critical thinking skills, individuals can ensure that AI remains a tool for empowerment rather than dependency. In the end, the true potential of AI is realized not when it replaces human thinking, but when it enhances it.