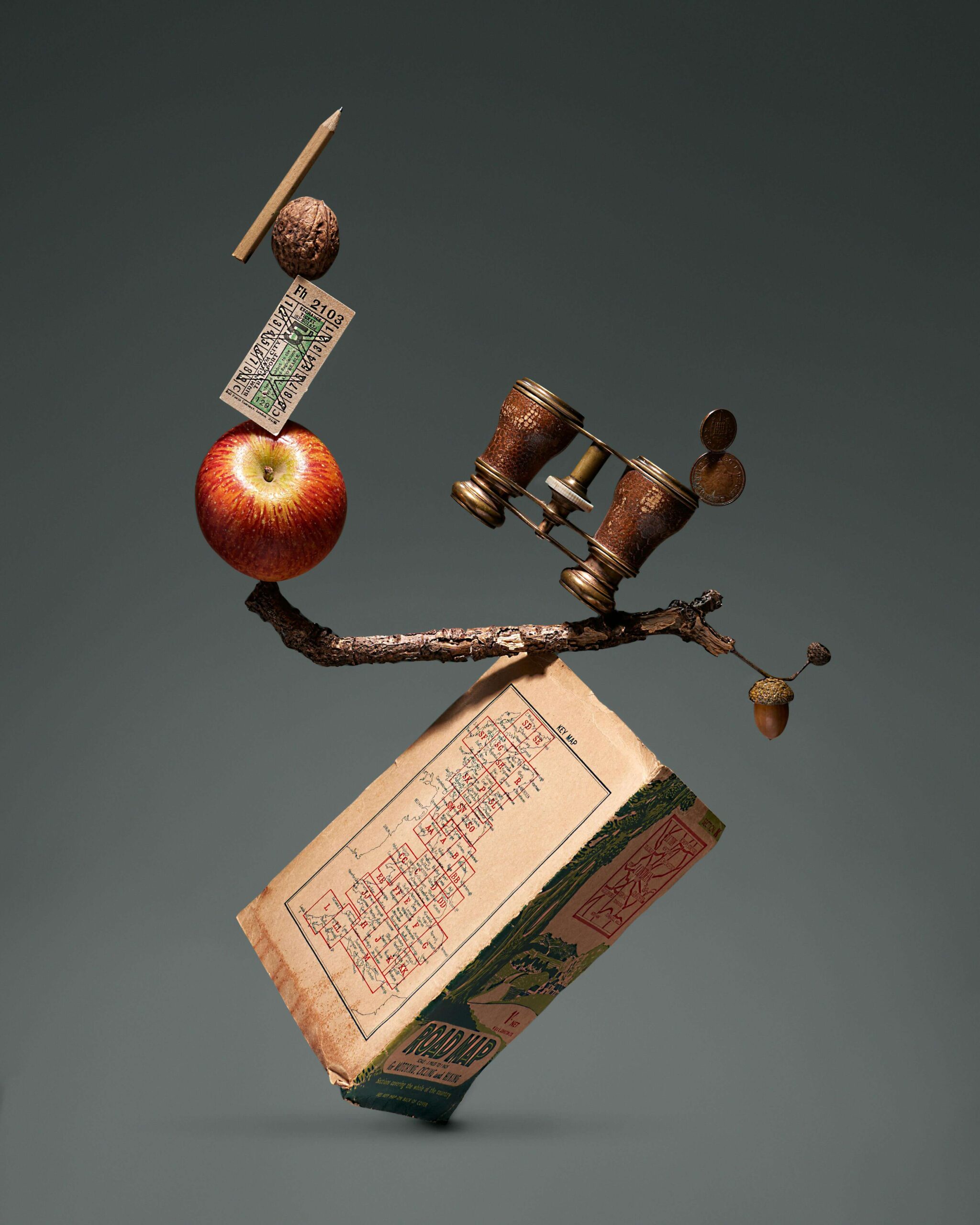

Human Accountability in AI Bias

Artificial Intelligence (AI) has rapidly moved from being a futuristic concept to a powerful force shaping modern life. From healthcare diagnostics to hiring decisions and criminal justice systems, AI is now deeply embedded in processes that directly affect human lives. While these systems promise efficiency and objectivity, a troubling reality persists: AI can be biased. Even more concerning is that this bias is often a reflection of human choices, not machine autonomy. In the pursuit of technological advancement, we may be seeing the goal (innovation and efficiency) but missing the truth about accountability.

The Illusion of Objectivity

One of the most widespread misconceptions about AI is that it is inherently neutral. Because machines operate on algorithms and data, many assume their decisions are free from human prejudice. However, AI systems are only as unbiased as the data they are trained on and the humans who design them.

For example, if an AI hiring tool is trained on historical data from a company that favored male candidates, the system may learn to replicate this pattern. The algorithm does not “intend” to discriminate, but it mirrors existing inequalities. In such cases, blaming the AI alone misses the deeper issue: the human decisions that shaped its learning process.
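
The hiring example can be made concrete with a small sketch. It is not a real hiring system: the records, the group labels, and the `fit_hire_rates` and `score` helpers are all invented for illustration. It assumes a deliberately naive "model" that learns only the historical hire rate per group, which is enough to show how skewed history carries over into new scores.

```python
from collections import defaultdict

# Hypothetical historical records: (gender, qualified, hired).
# All values are invented for illustration only.
history = [
    ("male", True, True), ("male", True, True), ("male", True, True),
    ("male", True, False),
    ("female", True, True), ("female", True, False),
    ("female", True, False), ("female", True, False),
]

def fit_hire_rates(records):
    """Learn the historical hire rate per group among qualified candidates."""
    hired = defaultdict(int)
    total = defaultdict(int)
    for gender, qualified, was_hired in records:
        if qualified:
            total[gender] += 1
            hired[gender] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}

def score(model, gender):
    """Score a qualified candidate using only the learned group rate."""
    return model[gender]

model = fit_hire_rates(history)
# Two equally qualified candidates receive different scores purely
# because of the pattern in the historical data.
print(score(model, "male"), score(model, "female"))
```

Nothing in the code "intends" to discriminate. The gap comes entirely from the records a person chose to train on.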

Where Bias Begins

AI bias can emerge at multiple stages:

- Data Collection: If the data used to train AI is incomplete or skewed, the system inherits those flaws. For instance, facial recognition systems have shown higher error rates for people with darker skin tones, in part because those groups were underrepresented in training datasets.

- Algorithm Design: Developers make choices about which variables to prioritize. These decisions, even when unintentional, can introduce bias.

- Interpretation of Results: Humans interpret AI outputs, and their own biases can influence how results are used.

In each of these stages, human involvement is central. AI does not operate in isolation; it reflects human judgment, values, and limitations.
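
For the data collection stage, one simple check is to compare each group's share of the training set against a reference share. The sketch below is a minimal illustration under stated assumptions: the group labels, the 50/50 reference shares, the 0.05 tolerance, and the `representation_gaps` helper are all hypothetical, and choosing the right reference population is itself a human judgment.

```python
from collections import Counter

def representation_gaps(labels, reference_shares, tolerance=0.05):
    """Return groups whose dataset share falls short of the reference
    share by more than `tolerance`, mapped to the size of the shortfall."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    n = len(labels)
    gaps = {}
    for group, expected in reference_shares.items():
        actual = counts.get(group, 0) / n
        if expected - actual > tolerance:
            gaps[group] = round(expected - actual, 3)
    return gaps

# Hypothetical dataset: 85 images labeled "lighter", 15 labeled "darker".
labels = ["lighter"] * 85 + ["darker"] * 15
reference = {"lighter": 0.5, "darker": 0.5}  # assumed target shares
print(representation_gaps(labels, reference))
```

A check like this only flags a shortfall. Deciding how to correct it remains a human decision.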

The Accountability Gap

One of the biggest challenges in addressing AI bias is the lack of clear accountability. When an AI system makes a harmful decision, who is responsible? Is it the developer who wrote the code, the company that deployed it, or the data scientists who trained it?

This ambiguity diffuses responsibility. Organizations may blame the technology, while developers may argue they were following instructions or using available data. Meanwhile, the individuals affected by biased decisions are left without recourse.

The concept of “algorithmic accountability” is still evolving, but it is clear that assigning responsibility is essential. Without accountability, there is little incentive to identify and correct bias.

Real-World Consequences

AI bias is not just a technical issue; it has real-world consequences that can reinforce inequality and harm vulnerable populations.

- Healthcare: Biased algorithms may misdiagnose or overlook certain groups, leading to unequal treatment.

- Criminal Justice: Risk assessment tools can disproportionately label certain communities as high-risk, influencing sentencing decisions.

- Employment: Automated hiring systems may exclude qualified candidates based on biased patterns in data.

These examples highlight that AI bias is not merely a theoretical concern; it directly impacts people’s lives, opportunities, and rights.

Human Responsibility: The Core of the Issue

At its core, AI bias is a human problem. While technology can amplify bias, it does not create it independently. The values, assumptions, and decisions of humans are embedded in every AI system.

Recognizing this shifts the conversation from blaming machines to examining human responsibility. It requires acknowledging that:

- Bias in AI reflects societal inequalities.

- Developers and organizations have a duty to identify and mitigate bias.

- Ethical considerations must be integrated into technological development.

This perspective does not diminish the importance of technical solutions but emphasizes that ethical responsibility cannot be outsourced to machines.

The Role of Transparency

Transparency is a critical step toward accountability. Many AI systems operate as “black boxes,” where even developers struggle to explain how decisions are made. This lack of transparency makes it difficult to identify bias and hold anyone accountable.

To address this, organizations must prioritize:

- Explainable AI: Systems that provide clear reasoning for their decisions.

- Open Data Practices: Transparency about the data used to train models.

- Audit Mechanisms: Regular reviews to detect and correct bias.

Transparency not only builds trust but also enables meaningful oversight.
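
One form an audit mechanism might take is a per-group error report. The sketch below compares false positive rates across groups, assuming an auditor has access to predictions and actual outcomes. The record layout, the group names, and the `false_positive_rates` helper are hypothetical, and the false positive rate is only one of several metrics an audit could examine.

```python
from collections import defaultdict

def false_positive_rates(records):
    """Compute the false positive rate per group.

    records: iterable of (group, predicted_positive, actual_positive).
    Only cases whose actual outcome is negative count toward the rate.
    """
    false_positives = defaultdict(int)
    negatives = defaultdict(int)
    for group, predicted, actual in records:
        if not actual:
            negatives[group] += 1
            false_positives[group] += int(predicted)
    return {g: false_positives[g] / negatives[g] for g in negatives}

# Hypothetical audit sample; all values are invented.
records = [
    ("group_a", True, False), ("group_a", False, False),
    ("group_a", False, False), ("group_a", False, False),
    ("group_b", True, False), ("group_b", True, False),
    ("group_b", False, False), ("group_b", False, False),
    ("group_a", True, True), ("group_b", True, True),
]
rates = false_positive_rates(records)
print(rates)
```

A large gap between groups does not explain why the system behaves this way, but it tells reviewers where to look and whom to ask.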

Ethical Design and Governance

Creating fair AI systems requires a proactive approach to ethics. This involves embedding ethical considerations into every stage of development rather than treating them as an afterthought.

Key strategies include:

- Diverse Development Teams: Including people from different backgrounds can help identify potential biases early.

- Bias Testing: Regularly testing AI systems for discriminatory outcomes, for example by comparing decision rates across demographic groups.

- Regulatory Frameworks: Governments and institutions must establish guidelines to ensure accountability.

Ethical AI is not just about avoiding harm; it is about actively promoting fairness and inclusivity.
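
As a minimal sketch of bias testing, the code below computes selection rates per group and the ratio between the lowest and highest rate. The 0.8 threshold follows the commonly cited "four-fifths" rule of thumb from US employment guidance. That threshold is an assumption brought in here, not something this article prescribes, and the data and helper names are hypothetical.

```python
def selection_rates(outcomes):
    """outcomes: dict mapping group name to a list of 0/1 decisions."""
    return {group: sum(d) / len(d) for group, d in outcomes.items()}

def disparate_impact_ratio(rates):
    """Ratio of the lowest selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any positive decisions")
    return min(rates.values()) / highest

# Hypothetical decisions (1 = selected, 0 = rejected); values are invented.
outcomes = {
    "group_a": [1, 1, 1, 0, 0],
    "group_b": [1, 0, 0, 0, 0],
}
rates = selection_rates(outcomes)
ratio = disparate_impact_ratio(rates)
THRESHOLD = 0.8  # assumed four-fifths rule of thumb
print(rates, ratio, "flag for review" if ratio < THRESHOLD else "ok")
```

A ratio below the threshold is a signal for human review, not a verdict. Interpreting it still depends on context and on who is accountable for the decision.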

The Danger of Over-Reliance

Another important aspect of accountability is recognizing the limits of AI. Over-reliance on automated systems can lead to a false sense of security, where human judgment is sidelined.

Humans must remain actively involved in decision-making processes, especially in high-stakes scenarios. AI should be seen as a tool to assist, not replace, human judgment. Maintaining this balance is essential to prevent blind trust in flawed systems.
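
One way to keep humans involved is to route decisions to a reviewer instead of acting on them automatically. The sketch below is an illustration under stated assumptions: the `Prediction` fields, the 0.9 confidence threshold, and the `route` helper are hypothetical, and real systems would need their own criteria for what counts as high-stakes.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float  # model's confidence in the label, from 0.0 to 1.0
    high_stakes: bool  # whether the decision significantly affects a person

def route(pred, min_confidence=0.9):
    """Send high-stakes or low-confidence predictions to a human reviewer."""
    if pred.high_stakes or pred.confidence < min_confidence:
        return "human_review"
    return "auto"

print(route(Prediction("approve", 0.97, high_stakes=False)))
print(route(Prediction("deny", 0.97, high_stakes=True)))
print(route(Prediction("approve", 0.62, high_stakes=False)))
```

Routing high-stakes cases to a person regardless of confidence reflects the point above: a confident model can still be confidently wrong.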

Toward a Culture of Accountability

Addressing AI bias requires more than technical fixes; it demands a cultural shift. Organizations must foster a sense of responsibility among all stakeholders, from developers to executives.

This includes:

- Encouraging ethical reflection in technological development.

- Holding individuals and organizations accountable for outcomes.

- Prioritizing fairness alongside efficiency and innovation.

By creating a culture of accountability, we can ensure that AI serves society rather than reinforcing existing inequalities.

Conclusion

The rapid advancement of AI presents both opportunities and challenges. While these systems have the potential to improve efficiency and decision-making, they also risk perpetuating bias if not carefully managed.

“Seeing the goal, missing the truth” captures the essence of this dilemma. In our pursuit of technological progress, we must not overlook the human responsibility at the heart of AI systems. Bias in AI is not an inevitable flaw of technology but a reflection of human choices.

To build fair and trustworthy AI, we must move beyond the illusion of machine neutrality and embrace accountability at every level. Only then can we ensure that AI fulfills its promise without compromising justice, equality, and human dignity.