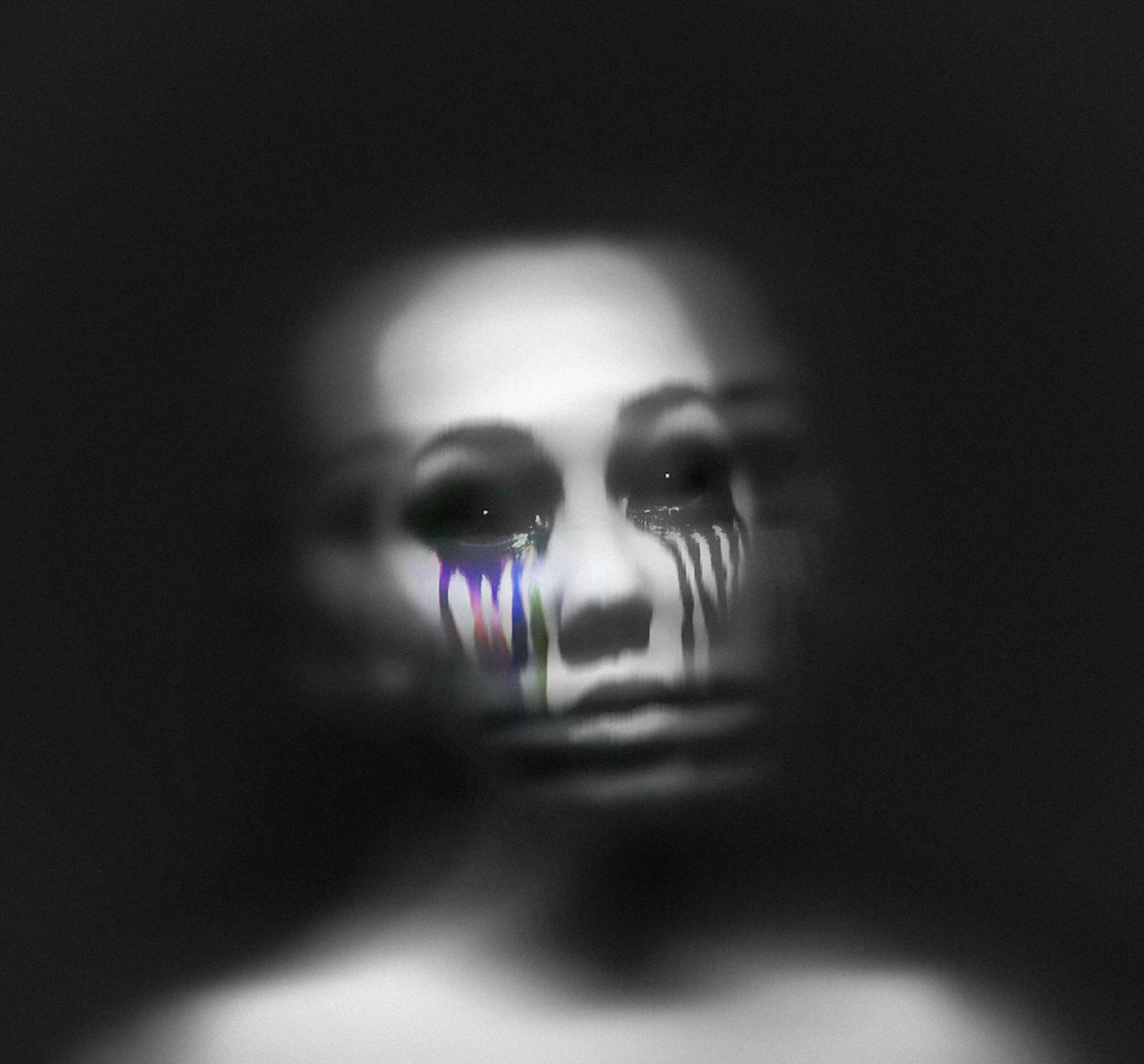

AI Hallucinations

Artificial Intelligence has rapidly become one of the most transformative technologies of the 21st century. From chatbots and digital assistants to automated writing tools and recommendation engines, AI systems are increasingly shaping the way we interact with information. Yet, alongside the excitement and innovation, a curious phenomenon has emerged: AI hallucinations.

The term might sound dramatic, even humorous, but it describes a real challenge in modern AI systems. When an AI model generates information that appears convincing but is actually false, fabricated, or misleading, it is said to be “hallucinating.” This raises an important question: Are we witnessing a flaw in technology, or simply misunderstanding how AI truly works?

Understanding the mirage of AI hallucinations is essential as we rely more heavily on AI tools in education, journalism, business, and everyday decision-making.

What Are AI Hallucinations?

AI hallucinations occur when an artificial intelligence system produces incorrect or entirely fabricated information while presenting it as factual. Unlike a simple mistake or typo, hallucinations often appear highly confident and believable.

For example, an AI system might:

- Cite a research paper that does not exist
- Provide historical facts that are incorrect
- Generate quotes from famous figures that were never spoken
- Offer technical explanations that sound logical but contain errors

This phenomenon is most common in large language models that generate human-like text by predicting patterns from massive datasets. Because the system’s goal is to produce coherent and fluent language, it sometimes prioritizes plausibility over accuracy.

In simple terms, AI doesn’t truly know things—it predicts what sounds right based on patterns it has learned.

Why Do AI Hallucinations Happen?

AI hallucinations are not random glitches. They stem from the way modern AI models are designed and trained.

1. Pattern Prediction Instead of True Understanding

Most AI systems operate using pattern recognition. They analyze enormous amounts of text data and learn which words or phrases typically follow others.

When asked a question, the model predicts the most likely sequence of words rather than verifying factual accuracy. This means the response may sound correct even when it is not.
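To make the idea concrete, here is a minimal sketch of pattern prediction using a toy bigram model. The corpus, function names, and parameters are all invented for illustration, and real language models are far more sophisticated, but the core behavior is similar: the model picks the most likely next word and never checks whether the resulting sentence is true.

```python
from collections import Counter, defaultdict

# Toy corpus, invented for illustration only.
CORPUS = (
    "the study was published in nature . "
    "the study was published in science . "
    "the study was published in nature . "
    "the report was written in haste ."
)

def train_bigrams(text):
    """Count how often each word follows each other word."""
    follows = defaultdict(Counter)
    words = text.split()
    for current, nxt in zip(words, words[1:]):
        follows[current][nxt] += 1
    return follows

def continue_text(follows, start, max_words=4):
    """Greedily append the most frequent next word; no fact-checking occurs."""
    out = [start]
    for _ in range(max_words):
        candidates = follows.get(out[-1])
        if not candidates:
            break  # the model has never seen anything follow this word
        out.append(candidates.most_common(1)[0][0])
    return " ".join(out)

model = train_bigrams(CORPUS)
print(continue_text(model, "report"))  # prints "report was published in nature"
```

The corpus never says the report was published anywhere, yet the model produces that claim because "was published in nature" is the most common pattern it has seen. That is a hallucination in miniature: fluent, plausible, and unsupported.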

2. Incomplete or Biased Training Data

AI models are trained on large datasets gathered from books, websites, and articles across the internet. If the training data contains errors, outdated information, or biases, the AI may reproduce them.

Additionally, the model might attempt to fill gaps in its knowledge by generating information that statistically fits the context.

3. Pressure to Provide an Answer

Unlike humans, who might say “I don’t know,” AI models are often designed to always generate a response. This design encourages the system to produce an answer even when it lacks reliable information.

The result can be a confident but inaccurate output.

4. Complexity of Language Models

Modern AI models are incredibly complex neural networks with billions or even trillions of parameters. While they can produce impressive results, their internal decision-making processes are difficult to interpret.

This complexity sometimes leads to unpredictable outputs, including hallucinations.

Real-World Examples of AI Hallucinations

AI hallucinations have already appeared in several high-profile situations.

Academic and Research Errors

Some researchers have discovered that AI tools occasionally generate fake academic citations. These references appear credible but cannot be found in real journals or databases.

Legal and Professional Risks

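In several court cases, lawyers who relied on AI-generated content unknowingly submitted fabricated case citations, and the courts later found that the cited cases did not exist. In Mata v. Avianca (2023), for example, a federal court in New York sanctioned attorneys whose brief cited nonexistent cases produced by ChatGPT.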

Media and Journalism

Journalists experimenting with AI-generated news summaries have found instances where key facts were distorted or invented.

These examples highlight why human oversight remains critical when using AI-generated information.

Why AI Hallucinations Matter

At first glance, AI hallucinations might seem like harmless mistakes. However, their implications are far more serious.

1. Erosion of Trust

If AI systems regularly produce unreliable information, users may begin to distrust not only AI tools but also legitimate digital content.

Trust is the foundation of information ecosystems, and hallucinations can undermine it.

2. Spread of Misinformation

AI-generated misinformation can spread rapidly, especially when shared through social media or automated platforms.

Inaccurate content may appear authoritative, making it difficult for users to distinguish truth from fiction.

3. Professional Consequences

In fields like healthcare, law, finance, and education, incorrect AI-generated information could lead to poor decisions or serious consequences.

Professionals must treat AI as an assistant rather than a source of unquestionable truth.

4. Ethical Concerns

The potential for AI to generate convincing but false narratives raises ethical questions about accountability, transparency, and responsible use of technology.

Are AI Hallucinations a Bug or a Feature?

Interestingly, some experts argue that hallucinations are not entirely negative. In creative fields, the ability of AI to generate unexpected ideas can be valuable.

For instance:

- Writers may use AI to brainstorm unique story concepts.
- Designers might explore unconventional artistic prompts.
- Musicians could experiment with new lyrical combinations.

In these contexts, AI’s “imagination” can become a creative tool.

However, in factual or professional contexts, hallucinations remain a significant problem that must be addressed.

How Developers Are Reducing AI Hallucinations

Technology companies and AI researchers are actively working to reduce hallucinations and improve reliability.

1. Reinforcement Learning from Human Feedback

Developers fine-tune AI systems using human ratings of model outputs, rewarding accurate and helpful responses and penalizing misleading ones.

2. Retrieval-Augmented Generation

Some AI systems now connect to verified databases or search engines to retrieve factual information before generating answers.

This approach helps ground responses in real data rather than pure prediction.
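The retrieval step can be sketched in a few lines. The example below is a simplified illustration, not how any particular product works: the `DOCUMENTS` list stands in for a verified database, the word-overlap scoring is a deliberately crude substitute for a real search index, and `build_prompt` only assembles the text that would be handed to a language model.

```python
import re

# Hypothetical mini knowledge base; a real system would query a vetted
# database or search index instead of a hard-coded list.
DOCUMENTS = [
    "The Eiffel Tower is located in Paris and was completed in 1889.",
    "Mount Everest is the highest mountain above sea level.",
    "The Pacific Ocean is the largest ocean on Earth.",
]

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query, documents, top_k=1):
    """Rank documents by the number of words they share with the query."""
    query_words = tokenize(query)
    scored = [(len(query_words & tokenize(doc)), doc) for doc in documents]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:top_k] if score > 0]

def build_prompt(query, documents):
    """Attach retrieved passages to the question, or return None if nothing matches."""
    passages = retrieve(query, documents)
    if not passages:
        return None  # nothing to ground the answer in, so do not guess
    context = "\n".join(passages)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

print(build_prompt("When was the Eiffel Tower completed?", DOCUMENTS))
```

The design choice worth noticing is the `None` branch: when retrieval finds nothing relevant, the system has a natural point at which to decline rather than generate an unsupported answer.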

3. Transparency and Uncertainty Indicators

Future AI systems may provide confidence scores or indicate when information might be uncertain, helping users evaluate reliability.
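One simple way such an indicator could work is sketched below. It assumes the system has a raw score for each candidate answer, which is a simplification, and the function names and the 0.6 threshold are arbitrary choices for illustration. The scores are converted to probabilities with softmax, and the system declines to answer when its top probability is too low.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def answer_with_confidence(candidates, scores, threshold=0.6):
    """Return (answer, confidence); answer is None when confidence is below threshold."""
    probs = softmax(scores)
    best = max(range(len(probs)), key=probs.__getitem__)
    confidence = probs[best]
    if confidence < threshold:
        return None, confidence  # signal uncertainty instead of guessing
    return candidates[best], confidence

# Hypothetical scores: one clear winner, then a near tie.
print(answer_with_confidence(["Paris", "Lyon"], [5.0, 1.0]))
print(answer_with_confidence(["Paris", "Lyon"], [1.0, 1.0]))
```

A caveat applies: a high probability only means the model strongly prefers one output over the others, not that the output is true. Confidence scores are useful to readers only to the extent that they are calibrated against real accuracy.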

4. Better Training Data

Improving the quality, diversity, and accuracy of training datasets can significantly reduce hallucinations.

What Users Should Do

While developers work on technical solutions, users also play an important role in preventing the spread of AI-generated misinformation.

Here are some practical tips:

- Verify important information using reliable sources.
- Avoid relying solely on AI for critical decisions.
- Use AI as a brainstorming tool rather than a fact authority.
- Cross-check citations and statistics.

By maintaining a healthy level of skepticism, users can benefit from AI while minimizing risks.

The Future of AI Reliability

AI technology is still evolving rapidly. Over time, improvements in architecture, data quality, and training methods will likely reduce hallucinations.

Some experts believe future AI systems will combine language models with structured knowledge bases, enabling them to verify facts before presenting information.

Others envision hybrid systems where AI collaborates closely with human experts to ensure accuracy and accountability.

Regardless of the approach, one thing is clear: the challenge of AI hallucinations is pushing researchers to build more trustworthy and transparent AI systems.

Conclusion

The phenomenon of AI hallucinations reveals both the power and limitations of modern artificial intelligence. While AI can generate remarkably human-like text and ideas, it does not possess genuine understanding or awareness.

Instead, it operates by predicting patterns—sometimes producing brilliant insights and other times creating convincing illusions.

Rather than viewing AI hallucinations as a reason to abandon the technology, we should see them as an opportunity to improve it. With better design, stronger oversight, and responsible use, AI can continue to evolve into a powerful tool for knowledge, creativity, and innovation.

In the end, the question isn’t whether AI sometimes “hallucinates.” The real question is how we, as users and creators of technology, learn to navigate the mirage while building a future where artificial intelligence enhances human intelligence rather than replacing it.